
Because Swarmchestrate’s application-centric orchestration approach is complex, a Cloud-to-Edge simulation environment is being developed in parallel with the actual system implementation. The goal of this simulation environment is to allow safe and repeatable experimentation by mimicking the behaviour of the real system as realistically as possible. For this purpose, we are extending the open-source DISSECT-CF-Fog simulator, which provides a flexible, modular, and energy-aware simulation framework that is well suited for modelling complex edge, fog, and cloud computing scenarios.

The rationale behind this decision is twofold: first, the simulation environment enables end-to-end modelling of orchestration services, including application submission, resource allocation, deployment, and runtime management, by mirroring the behaviour of a complete Swarmchestrate ecosystem. Second, DISSECT-CF-Fog is further extended towards the concept of a Digital Twin, acting as a dynamic virtual replica of the real Swarmchestrate infrastructure and demonstrators’ applications, parallel to the execution of the live system. This allows us to predict system behaviour, rigorously test orchestration strategies, and optimise resource allocation in a controlled, risk-free environment.

In this article, we will focus on the “Urban Noise Classification” demonstrator managed by InnoRenew CoE, UP IAM, as a detailed example of using the simulation environment. The challenge addressed by this demonstrator is how Swarmchestrate can enable scalable, real-time urban noise monitoring by dynamically orchestrating lightweight sound classification workloads across distributed edge nodes. In particular, the system must be able to intelligently reroute tasks away from devices exposed to direct sunlight, or approaching thermal throttling limits, towards cooler, nearby edge nodes.

To improve application execution in such scenarios, we move from a reactive, rule-based orchestration strategy to a more sophisticated, proactive approach. This approach is based on a decision-making logic supported by deep learning, specifically Transformer-based time-series forecasting, which predicts when an edge node is likely to exceed a predefined CPU temperature threshold. Based on these predictions, the system continuously assesses upcoming thermal risks by analysing the expected temperature evolution over a future time window. Edge nodes are dynamically classified into safe or thermally critical states before actual throttling limits are reached. When an application component is running on a node predicted to become thermally constrained, the orchestration layer proactively starts a new instance on a safer node, selected based on the lowest predicted temperature. Application instances are only terminated when the overall load is low and no thermal risks are anticipated. To ensure system stability, a cooldown mechanism is applied to prevent frequent start-stop oscillations and unnecessary reconfiguration.
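The decision loop described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the Swarmchestrate implementation: the names `Orchestrator` and `decide`, the threshold, cooldown and low-load values, the use of the peak forecast value as the ranking criterion, and the choice of which instance to stop are all hypothetical. The forecasts are taken as given; the Transformer model that would produce them is out of scope here.

```python
from dataclasses import dataclass

# All three constants are illustrative assumptions, not values from the article.
THRESHOLD_C = 80.0   # CPU temperature treated as the throttling threshold
COOLDOWN_S = 300.0   # minimum time between two scaling actions
LOW_LOAD = 0.3       # overall load (0..1) below which scaling down is allowed


@dataclass
class Orchestrator:
    running: set                           # nodes currently hosting an instance
    last_action_at: float = float("-inf")  # time of the last start/stop

    def decide(self, now, forecasts, load):
        """Return ("start", node), ("stop", node) or ("none", None).

        forecasts maps each node name to its predicted CPU temperatures
        over the look-ahead window; load is the overall load in [0, 1].
        """
        # Cooldown: suppress start-stop oscillations.
        if now - self.last_action_at < COOLDOWN_S:
            return ("none", None)

        # Classify nodes by the hottest point of their forecast window.
        peak = {node: max(temps) for node, temps in forecasts.items()}
        critical = {node for node, p in peak.items() if p >= THRESHOLD_C}

        # An instance sits on a node predicted to overheat: start a new
        # instance on the safe, unused node with the lowest predicted peak.
        if self.running & critical:
            candidates = [n for n in peak
                          if n not in critical and n not in self.running]
            if not candidates:
                return ("none", None)
            target = min(candidates, key=peak.get)
            self.running.add(target)
            self.last_action_at = now
            return ("start", target)

        # Scale down only when load is low and no thermal risk is predicted.
        # Stopping the hottest running node is an assumption of this sketch.
        if load < LOW_LOAD and not critical and len(self.running) > 1:
            victim = max(self.running, key=peak.get)
            self.running.remove(victim)
            self.last_action_at = now
            return ("stop", victim)

        return ("none", None)
```

Called once per simulation tick, for example `Orchestrator(running={"a"}).decide(now, forecasts, load)`, the sketch starts a new instance before the at-risk node reaches the threshold, while the cooldown check keeps consecutive calls from triggering repeated reconfiguration.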

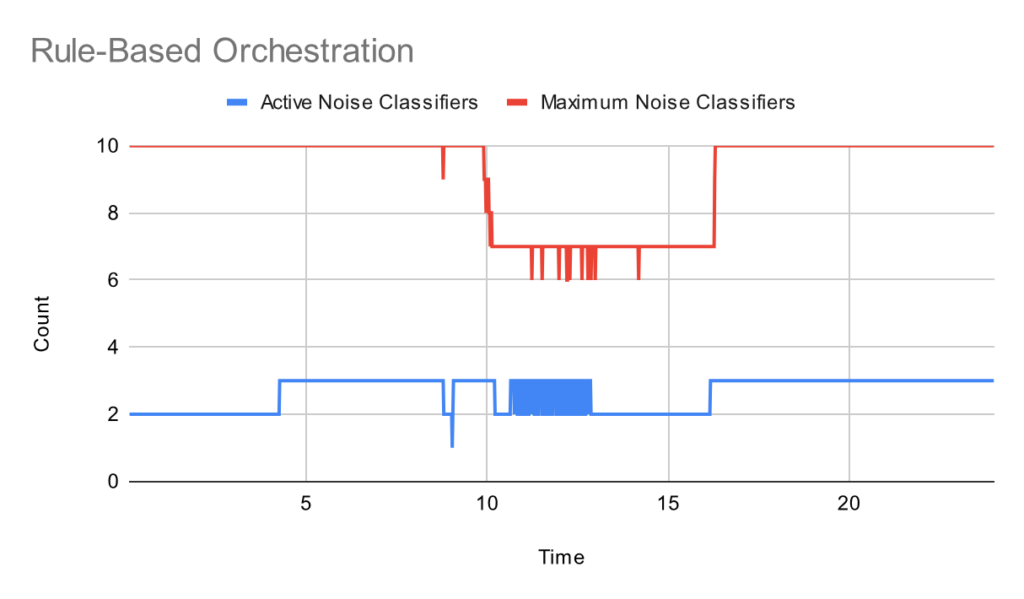

Results when employing reactive, rule-based orchestration (© Swarmchestrate consortium 2024-2026)

One of the key challenges addressed by the simulation environment is the complex interaction of factors influencing edge device temperature. CPU temperature is affected by multiple dynamic parameters, including environmental conditions such as solar radiation, as well as the current CPU load, which in turn depends on the frequency and intensity of sound events triggering the classification pipelines. In our model we explicitly consider several real-world factors, including:

- active cooling mechanisms of Raspberry Pi devices;

- sun intensity;

- device placement (indoor or outdoor); and

- direct sun exposure.

The thermal behaviour of devices is modelled using Newton’s law of cooling, which states that the rate of change of an object’s temperature is proportional to the difference between its own temperature and that of the surrounding environment. This physical model allows us to capture realistic temperature dynamics within the simulation.
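As a minimal sketch of this model, Newton’s law of cooling can be written as dT/dt = -k (T - T_env), where k is a cooling constant. The Python below advances a temperature by one time step using the exact solution of that equation. The function names and the numeric values in the usage note are assumptions for illustration; the article does not state how CPU load, sun intensity or active cooling enter the simulator’s model, so they are left out here.

```python
import math


def cool_step(temp_c, ambient_c, k_per_s, dt_s):
    """Advance a temperature by dt_s seconds under Newton's law of cooling.

    Uses the closed-form solution of dT/dt = -k (T - T_env):
    T(t + dt) = T_env + (T(t) - T_env) * exp(-k * dt)
    """
    return ambient_c + (temp_c - ambient_c) * math.exp(-k_per_s * dt_s)


def simulate(temp_c, ambient_c, k_per_s, dt_s, steps):
    """Return the temperature trace, including the starting value."""
    trace = [temp_c]
    for _ in range(steps):
        temp_c = cool_step(temp_c, ambient_c, k_per_s, dt_s)
        trace.append(temp_c)
    return trace
```

For example, `simulate(80.0, 30.0, 0.01, 10.0, 100)` yields a trace that decays monotonically from 80 °C towards the 30 °C environment. Using the closed-form step rather than a forward Euler update keeps the result stable for any step size.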

To evaluate the effectiveness of the orchestration strategies, we monitor several KPIs, including the percentage of time edge devices remain below the CPU throttling temperature threshold, and the end-to-end application latency. The extended simulation environment helps us better understand system behaviour under varying environmental and workload conditions. More importantly, it supports the fine-tuning of the orchestration algorithms that can reduce unnecessary container migrations, lower network utilisation, decrease end-to-end latency, and minimise the time edge devices operate close to critical thermal limits.
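The first of these KPIs is straightforward to compute from a temperature trace. The sketch below assumes uniformly spaced samples, so that the share of samples equals the share of time; the function name and the 80 °C value in the usage note are placeholders, not figures from the experiments.

```python
def pct_time_below(temps_c, threshold_c):
    """Percentage of samples strictly below the threshold.

    Assumes the samples are evenly spaced in time.
    """
    if not temps_c:
        raise ValueError("empty temperature trace")
    below = sum(1 for t in temps_c if t < threshold_c)
    return 100.0 * below / len(temps_c)
```

With a trace of `[60, 70, 85, 90]` and a threshold of 80, two of the four samples are below the limit, so the function returns `50.0`.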

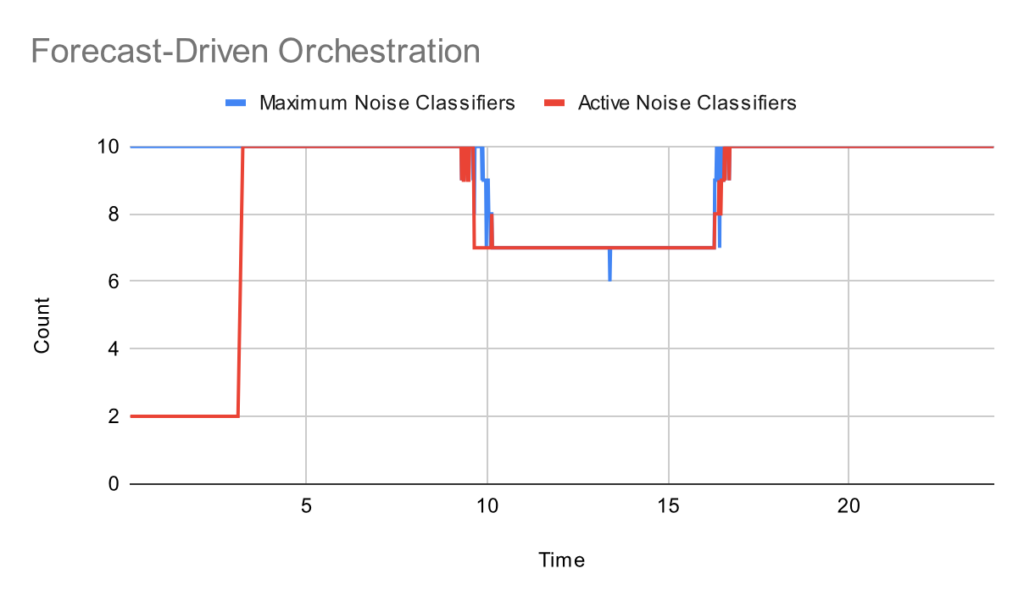

To evaluate the behaviour of the system under realistic conditions, we define a representative simulation scenario that captures the most relevant characteristics of an urban edge environment. The experiments simulate a full day of operation with 10 noise sensors deployed across heterogeneous edge nodes, placed in both indoor and outdoor locations and exposed to varying environmental conditions. Noise levels are sampled at 10-second intervals, and classification tasks are triggered when the measured sound intensity exceeds a predefined threshold of 70 dB.

The results indicate that Forecast-driven Orchestration makes more efficient use of the available edge nodes by proactively distributing workloads based on predicted thermal behaviour. This leads to a higher number of active classifier containers without pushing devices close to their thermal limits.

Results when employing proactive, forecast-based orchestration (© Swarmchestrate consortium 2024-2026)

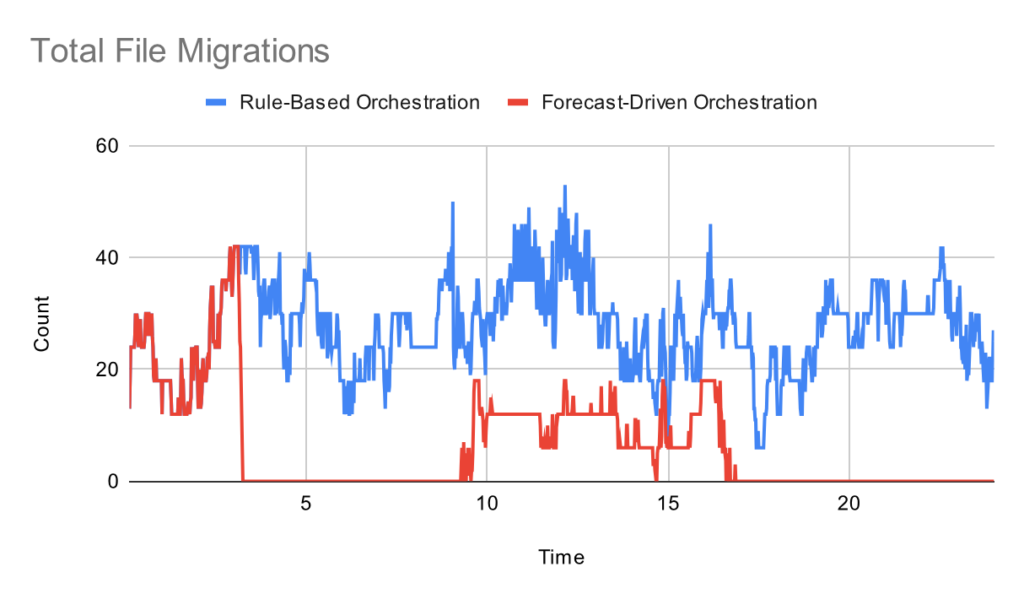

As a consequence, fewer file migrations are required, which directly reduces network utilisation and end-to-end latency. Importantly, running additional classifier containers does not result in a significant increase in CPU temperature, as the workload is more evenly distributed across the edge infrastructure.

Total file migrations when using rule-based vs forecast-based orchestration (© Swarmchestrate consortium 2024-2026)

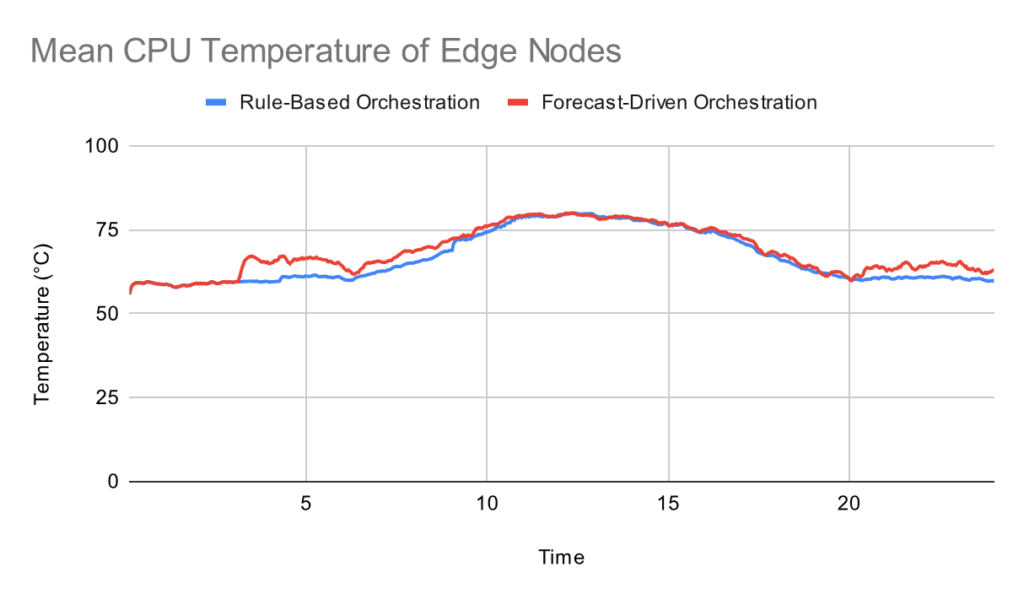

Despite running a higher number of classifier containers, the mean CPU temperature of edge nodes does not increase significantly under Forecast-driven Orchestration. This indicates that proactive classifier placement does not significantly degrade the KPI defined earlier: the percentage of time edge devices remain below the CPU throttling threshold.

CPU temperature when using rule-based vs forecast-based orchestration (© Swarmchestrate consortium 2024-2026)

Overall, the presented simulation-based approach demonstrates how forecast-driven orchestration can improve resource utilisation and system stability in dynamic edge environments. These results provide early insights into expected system behaviour and guide further refinement before deployment in live environments.

Editors: Andras Markus and Attila Kertesz, FrontEndART Software Ltd.